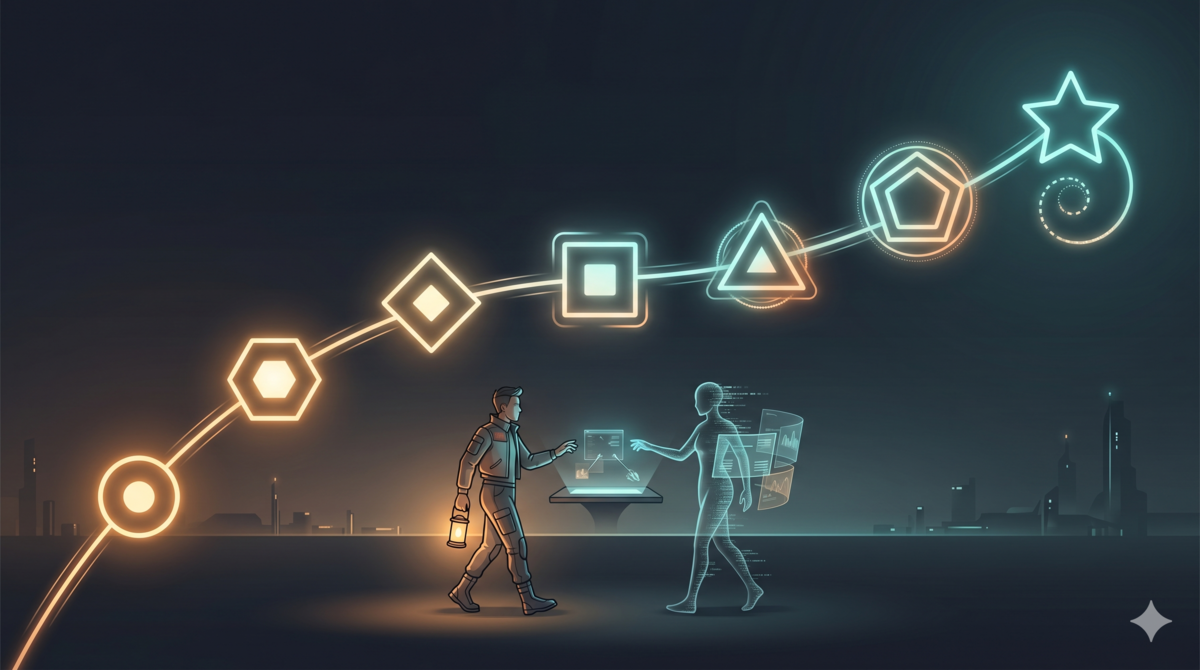

Looking Back: Seven Human-AI Collaboration Patterns in the Aristotle Project

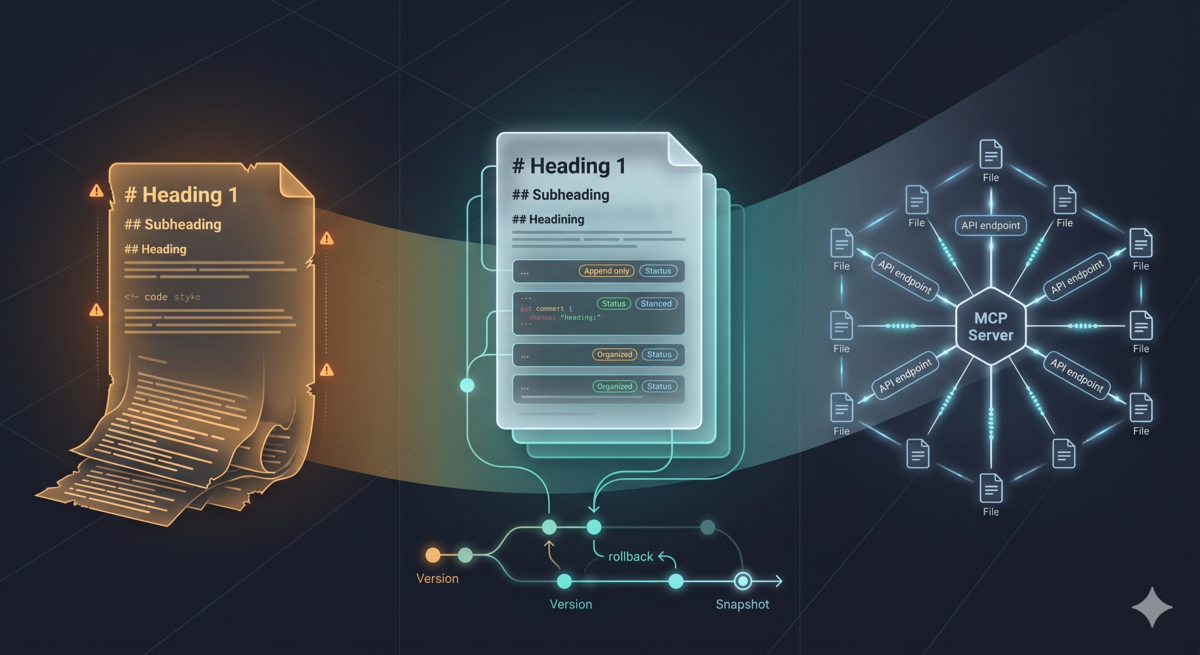

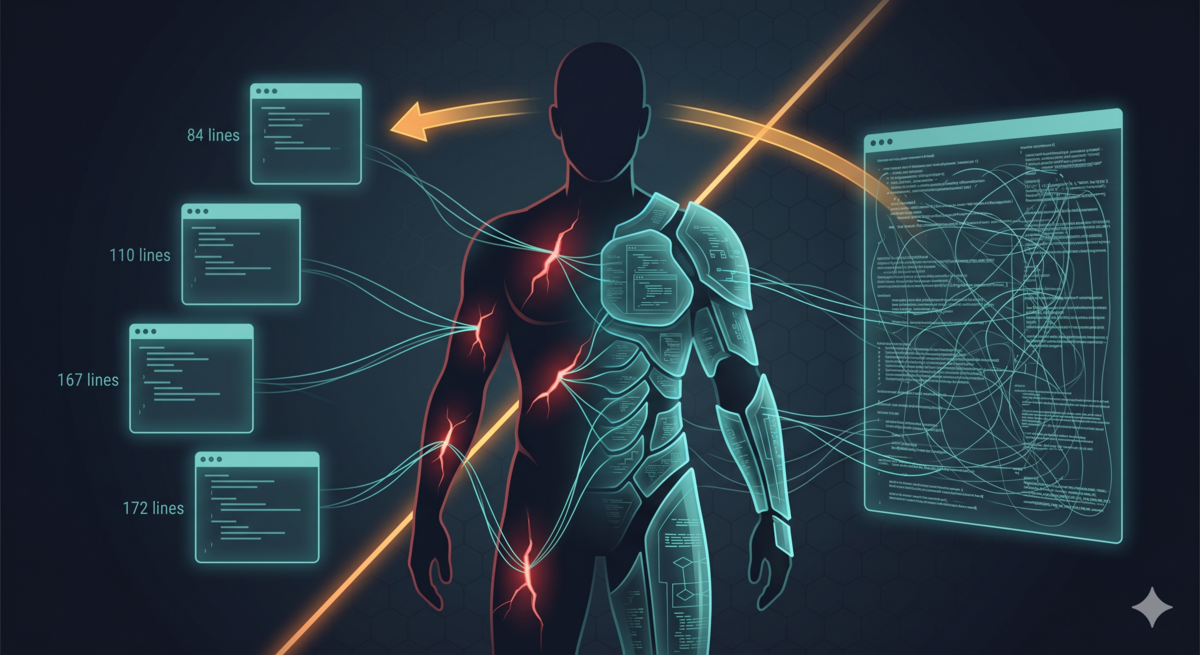

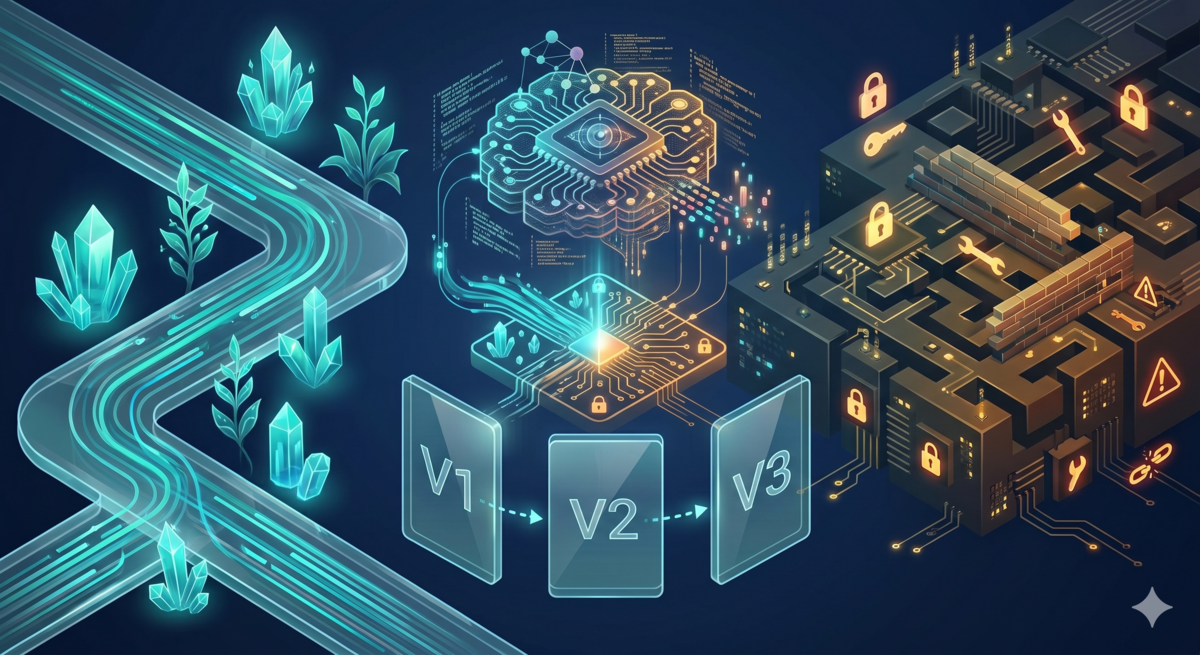

Five articles in. Time to step back and look at the path itself. *Aristotle: Teaching AI to Reflect on Its Mistakes* covered the design philosophy and initial implementation. *claude-code-reflect: Same Metacognition, Different Soil* told the story of porting across platforms. *Trust Boundaries: One Idea, Two Systems* proposed a trust tiering model. *From Scars to Armor: Harness Engineering in Practice* validated the theory through refactoring. *A Markdown’s Three Lives: From Static Rules to a Git-Backed MCP Server* evolved the rule storage from append-only to the GEAR protocol. ...