OMO vs SLIM: I Switched Plugins to Save Tokens. Here's What Actually Happened.

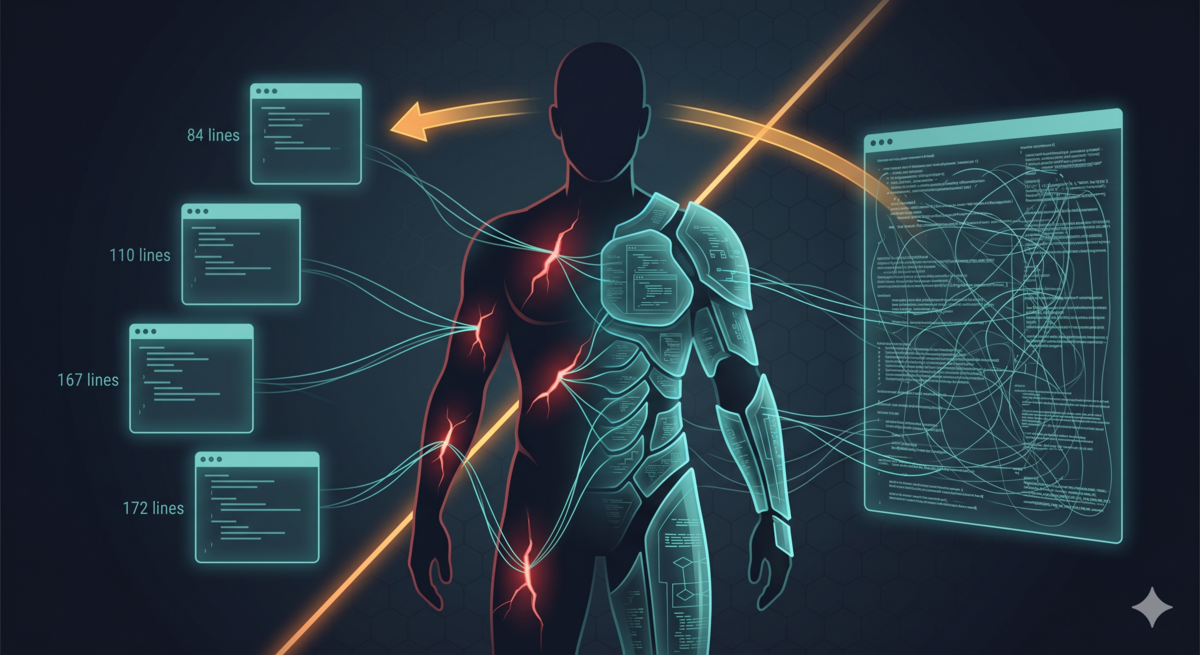

TL;DR: I switched from OMO to SLIM and ran it for 13 days. Average tokens per message dropped 3.7%, which is practically flat. By task type: coding was flat, writing rose 61%, review fell 53%, and debug rose 121% (unreliable, tiny sample). Aristotle dropped 68%, but the main cause was an architecture rewrite, not the plugin. “Saving tokens” is not a global fact; it is local. The real differences are in experience and architecture choices, not in token counts. ...
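
To make the "global vs. local" point concrete, here is a minimal sketch of how an overall average can stay flat while individual task types move in opposite directions. The record layout `(task_type, tokens, messages)` and every number in it are made up for illustration; they are not the measurements from this post, and the helper names are hypothetical.

```python
from collections import defaultdict


def avg_tokens_per_message(records):
    """Return (overall average, {task_type: average}) for
    (task_type, tokens, messages) records."""
    totals = defaultdict(lambda: [0, 0])  # task_type -> [tokens, messages]
    for task_type, tokens, messages in records:
        totals[task_type][0] += tokens
        totals[task_type][1] += messages
    per_task = {t: tok / msg for t, (tok, msg) in totals.items()}
    all_tokens = sum(tok for tok, _ in totals.values())
    all_messages = sum(msg for _, msg in totals.values())
    return all_tokens / all_messages, per_task


def pct_change(before, after):
    """Percent change from before to after."""
    return (after - before) / before * 100.0


# Hypothetical data, NOT the numbers measured in this post.
omo = [("coding", 100_000, 100), ("writing", 20_000, 40), ("review", 60_000, 30)]
slim = [("coding", 100_000, 100), ("writing", 32_000, 40), ("review", 48_000, 30)]

omo_overall, omo_by_task = avg_tokens_per_message(omo)
slim_overall, slim_by_task = avg_tokens_per_message(slim)

# The overall figure hides what happens inside each task type.
overall_change = pct_change(omo_overall, slim_overall)
per_task_change = {
    task: pct_change(omo_by_task[task], slim_by_task[task]) for task in omo_by_task
}

print(f"overall: {overall_change:+.1f}%")
for task, change in per_task_change.items():
    print(f"{task}: {change:+.1f}%")
```

In this toy data the writing increase and the review decrease cancel out in the overall average, which is why a single headline percentage says little about any one kind of task.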